Not a magic wand. A magnifier.

If your codebase is tight, the AI produces more software that is just as tight.

If your codebase is a mess, the AI accelerates you into a bigger mess.

If your feedback culture is painful...

If your company culture is tense...

People treat AI as a speed boost for their existing workflow instead of rethinking what their workflow should be.

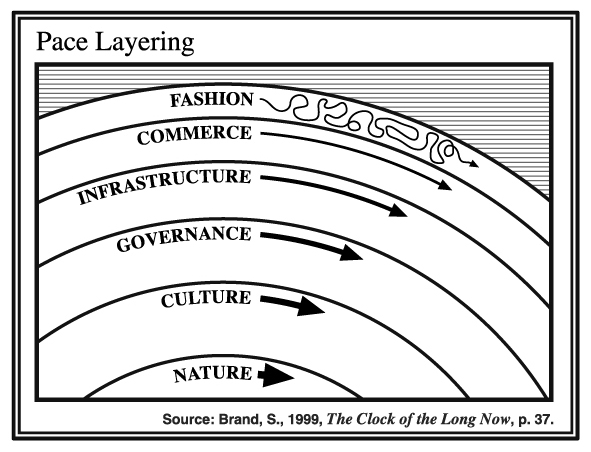

Not everything changes at the same speed.

Fashion moves fast. Infrastructure moves slow. Culture barely moves at all.

The fast layers innovate. The slow layers stabilise.

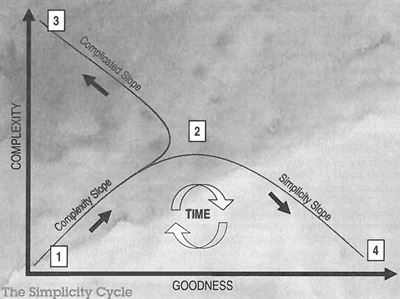

Adding complexity improves goodness — until it doesn't.

Past the peak, every addition makes things worse, not better.

The hard part: knowing when to stop adding and start removing.

Complexity is becoming cognitive debt.

The system grows more complex than anyone can hold in their head.

And it's not just you — your users and customers carry that complexity too.

Prediction: Feeling left behind and not in control will lead to stress, fights and burn-out.

The answer is simplicity, spring cleaning and proper documentation, not "rest".

“I have made this longer than usual because I have not had time to make it shorter.”

— Blaise Pascal

Simplicity is not where you start. It's where you arrive — after the effort of removing what doesn't belong.

The Simplicity Cycle — complexity helps, then hurts.

Pascal's insight — making things less complex takes significant effort.

AI is a magnifier — it amplifies whatever state your system is in.

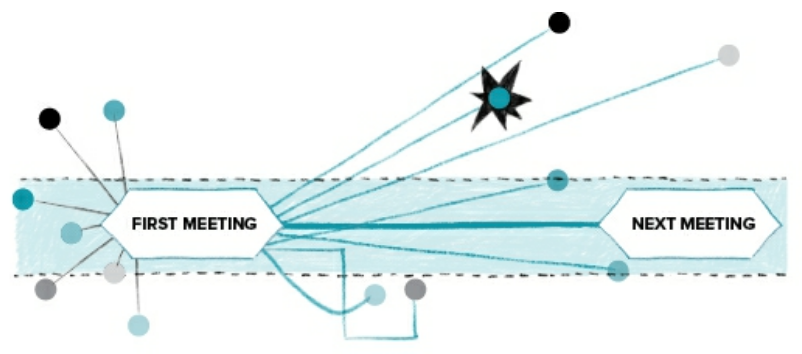

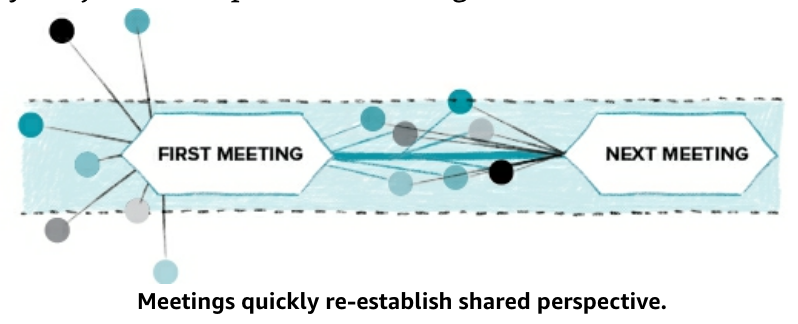

Every handover in the old production chain forced a conversation between different perspectives.

wireframe → design → front-end → back-end → QA

AI compresses the entire chain into one person, one session, hours instead of weeks.

The maker gains speed and coherence — but 5–6 quality conversations now happen zero times.

When you show a full prototype, everyone reacts on a different layer simultaneously.

Flow, UI, copy, UX, tech feasibility, edge cases — all at once.

The old process forced focus — you can't give colour feedback on a wireframe.

Now there's one artifact containing all layers, and meetings devolve into noise.

The prototype is done. But you reveal it in rising fidelity:

Engineering quality control doesn't disappear when AI writes code.

It migrates — to specs, tests, constraints, and risk management.

Pair programming, ensemble development, continuous integration.

These create the tight feedback loops that agent-assisted development requires.

“Your job is to deliver code you have proven to work.”

— Simon Willison

Untested AI-generated PRs are a dereliction of duty.

The job shifts from writing code to proving it works.

Read the post on Simon Willison's blog. Follow this man.

You need a test suite that operates on many different levels.

Unit tests, integration tests, smoke tests, end-to-end tests. The whole stack.
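
A minimal sketch of what "many levels" can look like in practice. Everything here is hypothetical: `slugify`, `build_url` and `render_index` are made-up stand-ins for your own code, and the tests are plain functions that would also be collected by a runner such as pytest. True end-to-end tests need a deployed system and are left out.

```python
import re


# Hypothetical code under test; none of these names come from the talk.
def slugify(title: str) -> str:
    """Lowercase a title and join its alphanumeric words with hyphens."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


def build_url(base: str, title: str) -> str:
    """Combine a base URL with the slug of a title."""
    return f"{base.rstrip('/')}/{slugify(title)}"


def render_index(base: str, titles: list[str]) -> str:
    """Render one link per line; the closest thing here to a whole app."""
    return "\n".join(build_url(base, t) for t in titles)


# Unit level: one function, no collaborators.
def test_unit_slugify():
    assert slugify("Hello, World!") == "hello-world"
    assert slugify("") == ""


# Integration level: two pieces working together.
def test_integration_build_url():
    assert build_url("https://example.com/", "AI Slop") == "https://example.com/ai-slop"


# Smoke level: does the top-level entry point run and return something sane?
def test_smoke_render_index():
    out = render_index("https://example.com", ["One", "Two"])
    assert out.splitlines() == ["https://example.com/one", "https://example.com/two"]


def run_all() -> list[str]:
    """Run every level, cheapest first, and report which tests passed."""
    passed = []
    for test in (test_unit_slugify, test_integration_build_url, test_smoke_render_index):
        test()  # raises AssertionError on failure
        passed.append(test.__name__)
    return passed
```

Ordering the levels from cheapest to most expensive keeps the feedback loop short: a broken unit test fails in milliseconds, before the slower levels run.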

Your rate of change just went through the roof.

The number of critical flaws found is skyrocketing.

This makes us stronger long-term, but very vulnerable right now.

You have to layer your security properly. This is not optional.

Not even for business people who want to go fast.

Use one LLM to evaluate the output of another.

"Thinking" models drastically outperform standard models as judges.

Use it to evaluate: code quality, test coverage, PR descriptions, documentation completeness.
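
A rough sketch of the judge pattern, under loud assumptions: a "model" is just any function from prompt text to reply text, the rubric and the `SCORE:` reply format are invented for illustration, and `stub_judge` is a fake that stands in for a real LLM call so the example runs offline. No real vendor API is shown.

```python
from dataclasses import dataclass
from typing import Callable

# Assumption: a model is any callable from prompt text to reply text.
# Wiring this to a real LLM client is deliberately left out.
Model = Callable[[str], str]


@dataclass
class Verdict:
    score: int   # 1 (poor) to 5 (excellent), parsed from the judge's reply
    reason: str


# Hypothetical rubric; adapt it to code quality, test coverage, docs, etc.
RUBRIC = (
    "You are reviewing a pull request description.\n"
    "Reply with 'SCORE: <1-5>' on the first line, then one line of reasoning.\n\n"
)


def judge_output(judge: Model, artifact: str) -> Verdict:
    """Ask the judge model to grade an artifact produced by another model."""
    reply = judge(RUBRIC + artifact)
    first, _, rest = reply.partition("\n")
    if not first.startswith("SCORE:"):
        raise ValueError(f"judge reply did not follow the rubric: {reply!r}")
    score = int(first.removeprefix("SCORE:").strip())
    if not 1 <= score <= 5:
        raise ValueError(f"score out of range: {score}")
    return Verdict(score=score, reason=rest.strip())


def stub_judge(prompt: str) -> str:
    """Fake judge so the sketch runs offline; a real one would call an LLM."""
    artifact = prompt[len(RUBRIC):]
    if "test" in artifact.lower():
        return "SCORE: 4\nDescribes how the change was verified."
    return "SCORE: 2\nNo evidence the change was tested."
```

Usage: `judge_output(stub_judge, "Adds retry logic. Tested with unit tests.")` returns a `Verdict` with score 4. Parsing the reply strictly, and failing loudly when the judge ignores the format, keeps a sloppy judge from silently approving sloppy work.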

"AI Slop" — low-quality, mass-produced, algorithmically generated code that looks polished but lacks substance.

The Slop Jar Rule

Get caught committing untested, unverified AI-generated code three times and you're buying the team lunch.

Name it. Shame it. Don't ship it.

--dangerously-skip-permissions